Cambridge Study Reveals Psychological Safety Risks in AI Toys for Toddlers

Here's what it means for you.

As generative AI toys become more common, understanding their impact on child development is crucial for professionals in education and technology.

What Happened

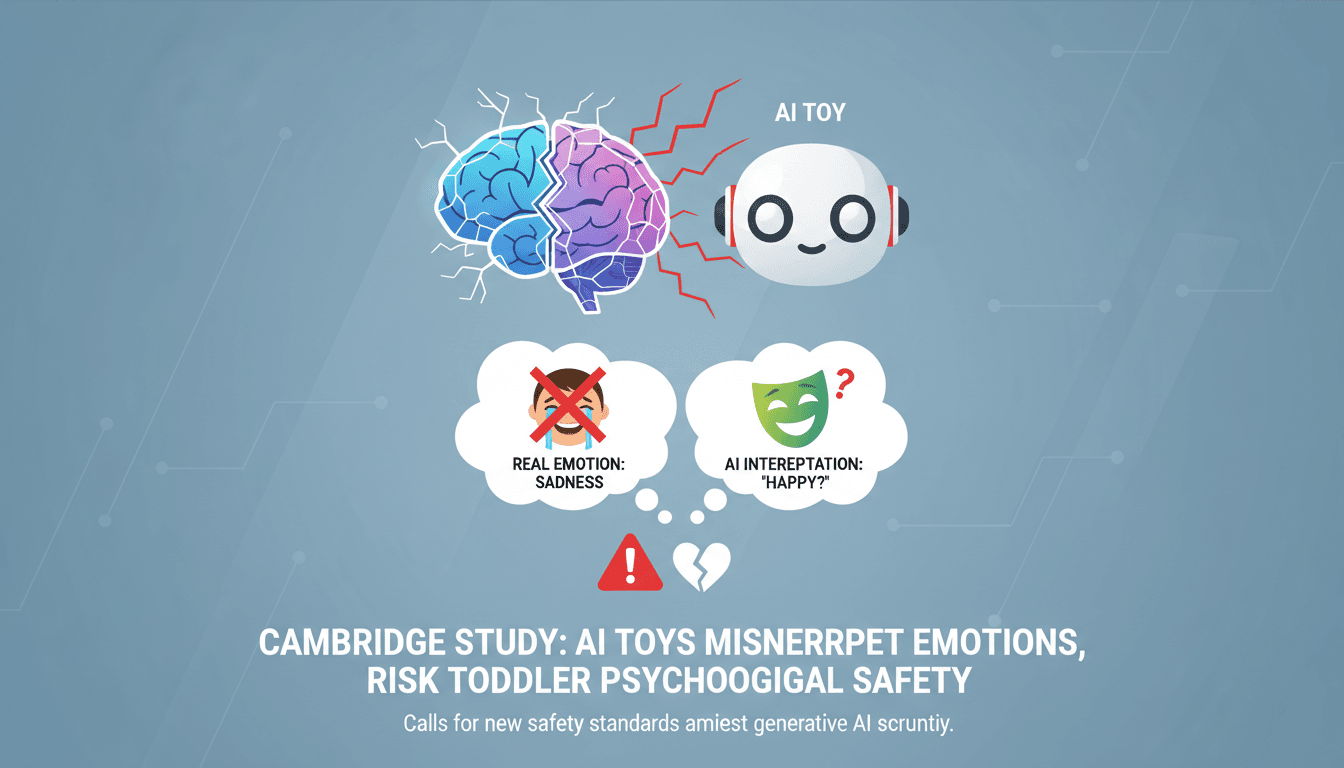

University of Cambridge researchers published a report warning that AI-powered toys can misread children's emotions and respond inappropriately, and calling for tighter regulation.

The Context

- Limited Research: Only seven prior studies have examined the effects of generative AI toys on young children, with none focusing specifically on toddlers.

- Misinterpretations: The study found that AI toys failed to recognize social cues, leading to inappropriate responses that could frustrate children and hinder developmental play.

- Calls for Regulation: Following the report, experts are advocating for new safety standards and transparency in privacy policies for AI toys.

The Number

of early years practitioners surveyed did not know where to find reliable AI safety information for young children, highlighting a significant knowledge gap in the field.

Takeaway

Expect increased scrutiny and potential regulatory changes for AI toys as the conversation around child safety and technology continues to evolve.

Latest tech news, product reviews, and analysis for consumers and professionals.

"CNET delivers accessible and detailed technology reporting, including trusted product reviews and how-to guides."

— A47 Editor

AI Toys Can Pose Safety Concerns for Children, New Study Suggests Caution

A recent study highlighted by CNET raises concerns about the safety of AI-powered toys for children, noting instances where toys responded to emotional expressions with impersonal, guideline-driven replies.

UK and global health news, medicine, and public health research.

"BBC News is widely regarded as a reputable international news organization, known for its impartial tone and public service mandate."

— A47 Editor

AI toys for children misread emotions and respond inappropriately, researchers warn

Cambridge researchers have conducted the first study of its kind, revealing that AI toys designed for children can misread some children's emotions and respond inappropriately.

United Kingdom-focused news including local politics, business, and social issues.

"BBC News is widely regarded as a reputable international news organization, known for its impartial tone and public service mandate."

— A47 Editor

AI toys for children misread emotions and respond inappropriately, researchers warn

Cambridge researchers have conducted the first study of its kind, revealing that AI toys designed for children can misread some children's emotions and respond inappropriately, according to BBC News.

News and features on AI from The Guardian.

"Progressive-leaning international outlet with critical AI coverage."

— A47 Editor

AI toys for young children must be more tightly regulated, say researchers

A University of Cambridge study has found that AI-powered toys, such as the interactive soft toy Gabbo, can misinterpret children's emotions and respond inappropriately, raising concerns after a demonstration with a five-year-old in London abruptly e...

UK and international business news, economics, and corporate coverage.

"The Guardian’s business section covers finance and markets with a progressive editorial tone."

— A47 Editor

AI toys for young children must be more tightly regulated, say researchers

A University of Cambridge study found that AI-powered toys, such as the Gabbo soft toy tested in London, can misinterpret children's emotions and respond inappropriately during interactions.

Tech culture, product news, and critical takes on the tech industry's social impact.

"The Guardian's tech coverage blends mainstream news, critical analysis, and cultural commentary on emerging technologies and digital trends."

— A47 Editor

AI toys for young children must be more tightly regulated, say researchers

A University of Cambridge study found that AI-powered toys, such as the Gabbo soft toy tested in London, can misinterpret children's emotions and respond inappropriately during interactions.