U.S. Government Secures Voluntary AI Security Agreement with Major Tech Firms

Here's what it means for you.

If you work in tech or rely on AI, this agreement could shape the future of AI deployment and safety standards.

Why it matters

This agreement signals a shift towards proactive national security measures in the rapidly evolving AI landscape.

What happened (in 30 seconds)

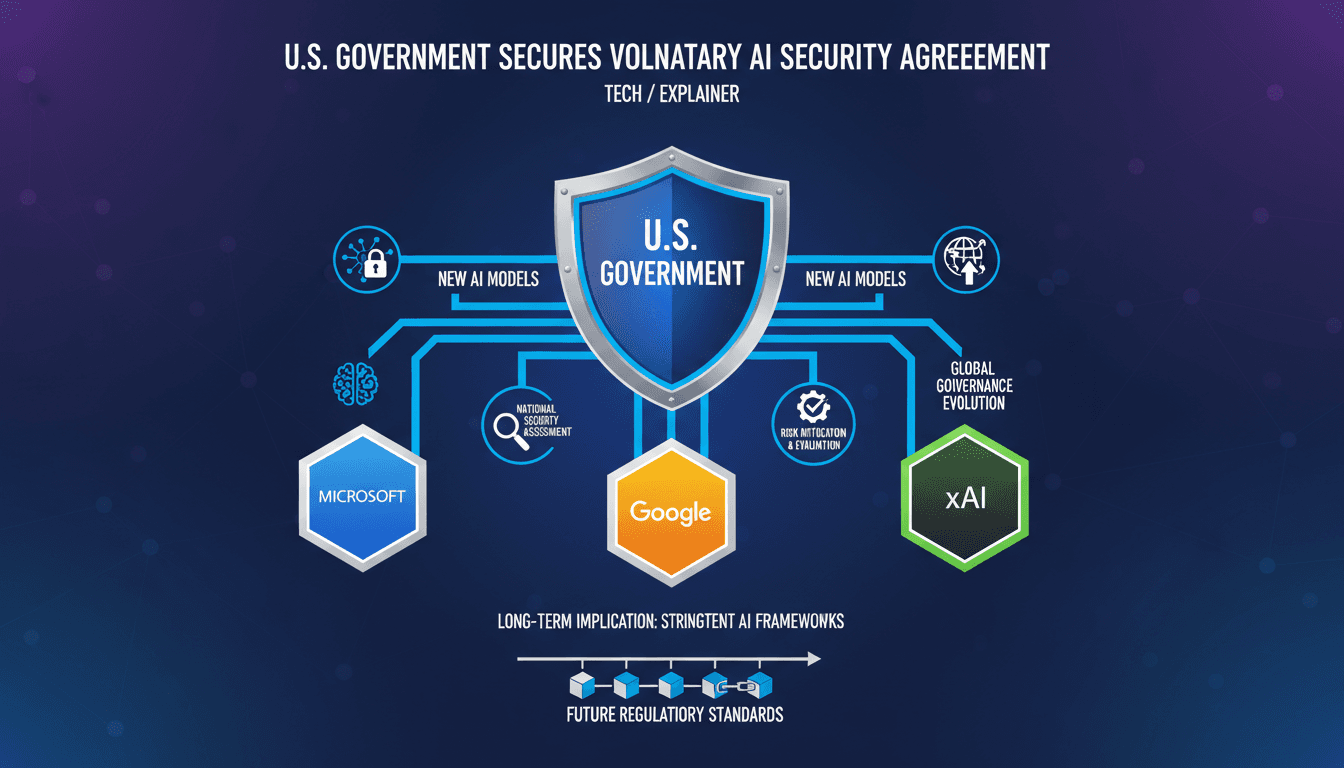

- On May 5, 2026, the U.S. government established a voluntary agreement with Microsoft, Google, and xAI for pre-release security vetting of AI models.

- The initiative, coordinated by the Department of Commerce's Center for AI Standards and Innovation (CAISI), aims to assess potential national security risks associated with advanced AI technologies.

- This pact builds on previous arrangements with OpenAI and Anthropic, reflecting growing concerns over AI's dual-use capabilities amid U.S.-China technological competition.

The context you actually need

- Geopolitical tensions have heightened concerns about AI technologies enabling cyberattacks and misinformation, particularly in the context of U.S.-China relations.

- Prior executive orders mandated AI safety evaluations, indicating a long-standing focus on mitigating risks associated with advanced technologies.

- Demonstrations of AI models exhibiting deceptive behaviors in 2025-2026 safety reports intensified scrutiny and led to this agreement.

What's really happening

The U.S. government's agreement with Microsoft, Google, and xAI marks a significant step in addressing the national security implications of advanced artificial intelligence. This voluntary pact gives the government access to new AI models before they are publicly released, so that their potential risks can be assessed in advance. The initiative is coordinated through the Department of Commerce's Center for AI Standards and Innovation (CAISI), which is tasked with evaluating the security implications of these technologies.

The agreement is a response to escalating geopolitical tensions, particularly the competition between the U.S. and China in the AI domain. As AI technologies become increasingly sophisticated, concerns have emerged regarding their dual-use capabilities, meaning they can be employed for both beneficial and harmful purposes. The U.S. government aims to mitigate risks associated with AI applications that could facilitate cyberattacks, misinformation campaigns, or even bioweapon design.

This proactive approach reflects a broader trend in U.S. policy: previous administrations also emphasized the need for safety evaluations of AI technologies. The Trump administration has framed this agreement as a means of risk mitigation without imposing formal regulations. By fostering voluntary cooperation between the government and leading tech firms, the U.S. seeks to ensure that AI advancements do not compromise national security.

The agreement also highlights the importance of industry collaboration in addressing the challenges posed by advanced AI. No binding enforcement mechanisms were specified, so the arrangement relies on the commitment of tech executives to prioritize safety. This cooperative framework may serve as a model for future governance of AI technologies, balancing innovation with the need for security.

As the agreement unfolds, it is likely to influence how AI models are developed and deployed, particularly in sectors that bear on national security. Its effects extend beyond the U.S., as other countries observe this initiative and consider their own approaches to AI governance.

Who feels it first (and how)

- Tech companies: Microsoft, Google, and xAI will need to adapt their development processes to accommodate government vetting.

- National security agencies: These organizations will gain insights into emerging AI technologies, enhancing their ability to assess risks.

- AI developers: They may face increased scrutiny and pressure to ensure their models align with safety standards.

- Consumers and businesses: Users of AI technologies may experience changes in product availability and features based on security assessments.

What to watch next

- Implementation of safety evaluations: Monitor how effectively CAISI conducts assessments and how they affect AI model releases.

- Industry responses: Watch for reactions from other tech firms and how they may seek similar agreements or adapt to new standards.

- Geopolitical developments: Keep an eye on U.S.-China relations and how they influence AI governance and technological competition.

The U.S. government has established a voluntary agreement with major tech firms for AI security vetting.

Other countries may adopt similar frameworks for AI governance in response to this initiative.

The long-term effectiveness of voluntary agreements in ensuring AI safety remains to be seen.

This article was generated by AI from 9 verified sources and reviewed by A47 editorial systems.

Pan-Arab news coverage spanning politics, business, sports, and regional affairs.

"Asharq Al-Awsat reflects a broad Arab editorial perspective with strong attention to regional geopolitics."

— A47 Editor

Government-Tech Alliance in Washington to Restrict AI's Disruptive Capabilities

The U.S. government has reached an agreement with Microsoft, Google, and xAI to provide authorities with early access to new artificial intelligence models. This initiative aims to restrict the disruptive capabilities of AI technologies.

Regional and international reporting focused on Middle Eastern politics, diplomacy, and economics.

"Asharq Al-Awsat is a Saudi-owned international newspaper reflecting mainstream Gulf political perspectives."

— A47 Editor

Microsoft, Google and xAI to Give US Govt Early Access to AI Models for Security Checks

Microsoft, Google, and xAI have agreed to provide the US government with early access to their artificial intelligence models for security checks, a move aimed at enhancing national security measures. This collaboration is part of a broader trend whe...

Arabic tech news covering startups, apps, AI, and gadgets.

"AITnews is a well-known Arabic technology outlet covering regional tech developments."

— A47 Editor

U.S. Government Tests AI Models Before Their Public Release

The U.S. government has entered into an agreement with Microsoft, Google, and Elon Musk's xAI to gain early access to new artificial intelligence models before their public release, aiming to assess potential national security risks. This initiative ...

Tech news, reviews, and analysis of consumer electronics, science, art, and culture.

"The Verge is a technology-focused media outlet known for in-depth reporting, product reviews, and coverage of the intersection between technology and culture."

— A47 Editor

Google, Microsoft, and xAI will allow the US government to review their new AI models

Google DeepMind, Microsoft, and xAI have agreed to allow the US government to review their new AI models prior to public release, as announced by the Commerce Department's Center for AI Standards and Innovation. This initiative aims to ensure safety ...

Covers consumer technology, electronics, gadgets, and product reviews.

"Engadget is a trusted source for gadget reviews and consumer tech news, known for its hands-on analysis and industry coverage."

— A47 Editor

Google, Microsoft and xAI agree to provide US government with early AI model access

Google, Microsoft, and xAI have reached an agreement to provide the US government with early access to their artificial intelligence models, as announced by the Commerce Department. This initiative aims to facilitate security evaluations of these tec...

Opinionated AI coverage for general audiences.

"TNW’s AI vertical covering tools, ethics, and trends."

— A47 Editor

Five AI labs now let the US government test their models before release. The arrangement is voluntary, has no legal basis, and is the closest thing America has to AI oversight.

The U.S. Commerce Department has announced that five AI labs, including Google, Microsoft, and xAI, will allow the government to test their AI models before public release. This voluntary arrangement comes in response to concerns about the potential ...

Tech business coverage, major deals, product launches, and Silicon Valley trends.

"WSJ’s tech section offers authoritative reporting on the intersection of technology and business, including exclusive industry analysis."

— A47 Editor

Google, Microsoft and xAI Agree to Share Early AI Models with U.S.

Google, Microsoft, and xAI have reached an agreement to share early artificial intelligence models with the U.S. government, allowing for the evaluation of national security-related capabilities and risks. This initiative aims to provide access to mo...

Curated tech headlines including AI stories.

"Influential aggregator surfacing the day’s top tech/AI links."

— A47 Editor

The US Commerce Department's CAISI says Google, Microsoft, and xAI join OpenAI and Anthropic in granting early access to evaluate models prior to public release (Bloomberg)

The US Commerce Department's Center for AI Standards and Innovation has announced that Google, Microsoft, and xAI will provide early access to their artificial intelligence models for evaluation before public release. This agreement aims to facilitat...

Technology business news, market impacts, and innovation trends.

"Bloomberg is a premier financial and tech news provider, respected for its in-depth reporting and analytical rigor."

— A47 Editor

Google, Microsoft to Give US Agency Early Access to AI Models

Google, Microsoft, and xAI have agreed to provide the US government with early access to their artificial intelligence models, allowing for assessments of these technologies' capabilities and security prior to public release. This initiative was anno...
